Combining the strengths of both Xenics and Telops technologies in the field of infrared imaging and camera systems leads to innovative and powerful solutions.

We would like to share our infrared technology knowledge with you, so that together we can find new and interesting infrared applications.

On this page you will find more information about infrared as a technology, its history, infrared detectors, and an infrared glossary.

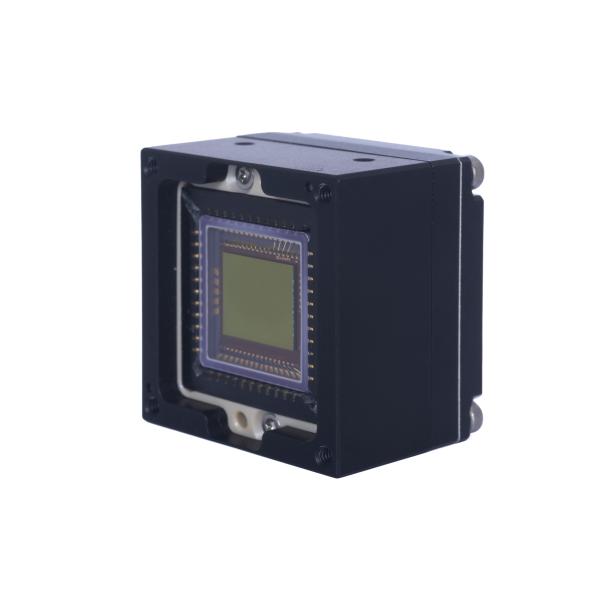

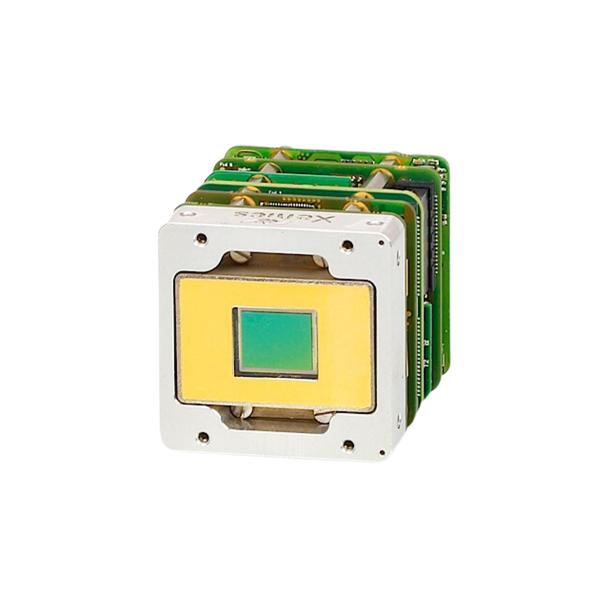

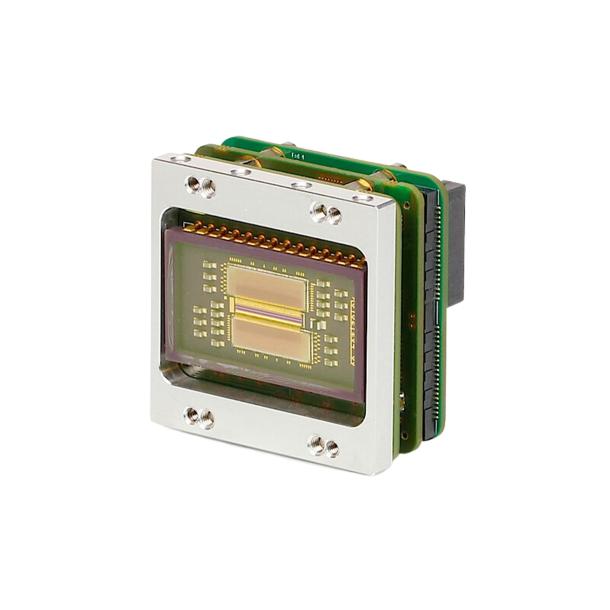

Xenics is a designer and manufacturer of infrared sensors, cores and cameras that deliver unparalleled electro-optical performance and functionality.

Telops designs and manufactures high-performance hyperspectral imaging systems and infrared cameras for defense, industrial, and academic research applications.

Infrared as a technology

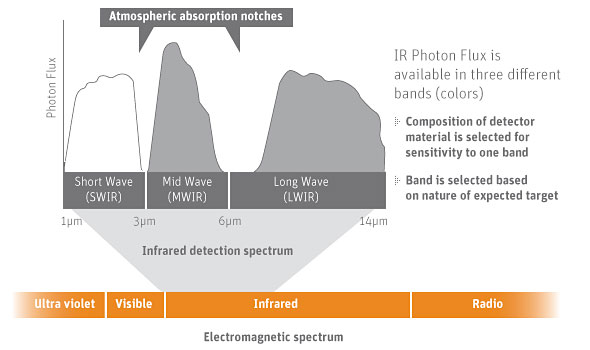

As the Latin prefix “infra” means “below” or “beneath”, “infrared” refers to the region beyond the red end of the visible color spectrum, at frequencies below those of red light. The infrared region is located between the visible and microwave regions of the electromagnetic spectrum. All objects radiate some energy in the infrared, even objects at room temperature and frozen objects such as ice. Therefore, infrared is often referred to as the heat region of the spectrum.
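The statement that room-temperature and frozen objects radiate in the infrared can be checked with Wien's displacement law, λ_max = b / T, which gives the wavelength at which an ideal blackbody emits most strongly. The sketch below is illustrative only: the function name `peak_wavelength_um` is hypothetical, and real objects are not perfect blackbodies, so the results are rough estimates.

```python
# Wien's displacement law: peak emission wavelength of an ideal blackbody.
# Assumption: the object behaves as a blackbody (real emissivity is below 1).

WIEN_B_UM_K = 2897.77  # Wien displacement constant, in micrometre-kelvin


def peak_wavelength_um(temperature_k: float) -> float:
    """Return the wavelength (in micrometres) of peak blackbody emission."""
    if temperature_k <= 0:
        raise ValueError("temperature must be a positive value in kelvin")
    return WIEN_B_UM_K / temperature_k


if __name__ == "__main__":
    # Temperatures chosen to match the examples in the text above.
    for label, temp_k in [("room temperature", 293.15), ("ice", 273.15)]:
        print(f"{label}: peak near {peak_wavelength_um(temp_k):.1f} um")
```

Both temperatures give a peak at roughly 10 µm, far beyond the red end of the visible spectrum at about 0.7 µm.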

History of Infrared Detectors

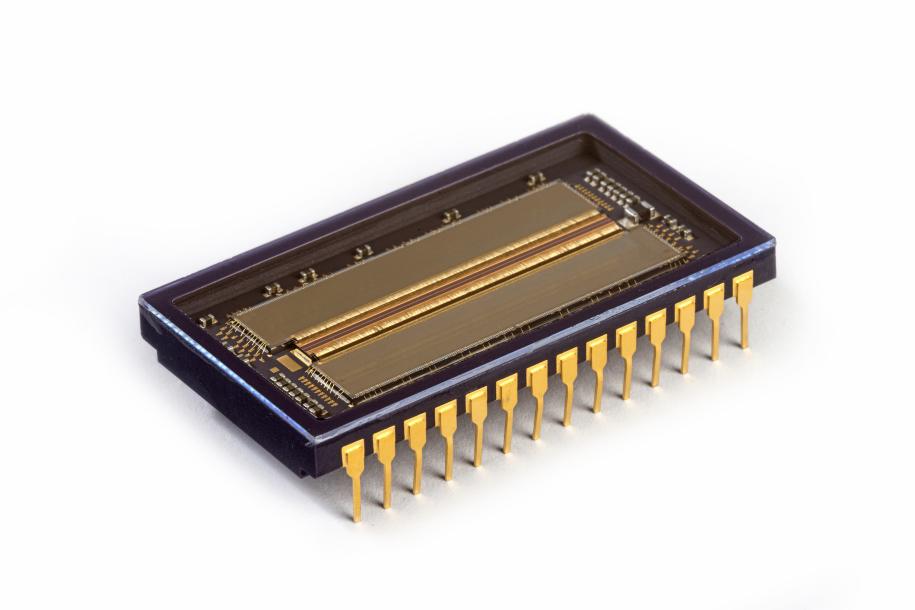

The history of infrared started in the 19th century. In those days, the development of infrared detectors focused mainly on thermometers and bolometers. Photon detectors were developed only in the 1940s, and they improved sensitivity and response time. During the 1940s and 1950s, new materials were developed for IR sensing, the most important of which were the semiconductor alloys of periodic table groups III-V, IV-VI, and II-VI. These alloys allowed the bandgap of the semiconductor to be tailored to a specific application.
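Tailoring the bandgap matters because a photon detector responds only to photons whose energy exceeds the bandgap, so the longest detectable wavelength is approximately λ_c ≈ hc / E_g. The following is a minimal sketch of that relation. The function name `cutoff_wavelength_um` is hypothetical, and the bandgap values are approximate textbook figures used for illustration, not data from this page or from any product specification.

```python
# Cutoff wavelength of a photon detector from its semiconductor bandgap.
# lambda_c [um] ~= (h * c) / E_g, with h * c ~= 1.23984 eV*um.

HC_EV_UM = 1.23984  # Planck constant times speed of light, in eV*micrometre


def cutoff_wavelength_um(bandgap_ev: float) -> float:
    """Return the approximate cutoff wavelength (micrometres) for a bandgap in eV."""
    if bandgap_ev <= 0:
        raise ValueError("bandgap must be a positive value in electronvolts")
    return HC_EV_UM / bandgap_ev


if __name__ == "__main__":
    # Approximate, illustrative bandgaps (assumed textbook values).
    examples = {
        "InGaAs lattice-matched to InP, room temperature": 0.75,
        "InSb, cooled to about 77 K": 0.23,
    }
    for material, bandgap_ev in examples.items():
        print(f"{material}: cutoff near {cutoff_wavelength_um(bandgap_ev):.2f} um")
```

A smaller bandgap pushes the cutoff to longer wavelengths, which is why different alloys suit different infrared bands.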

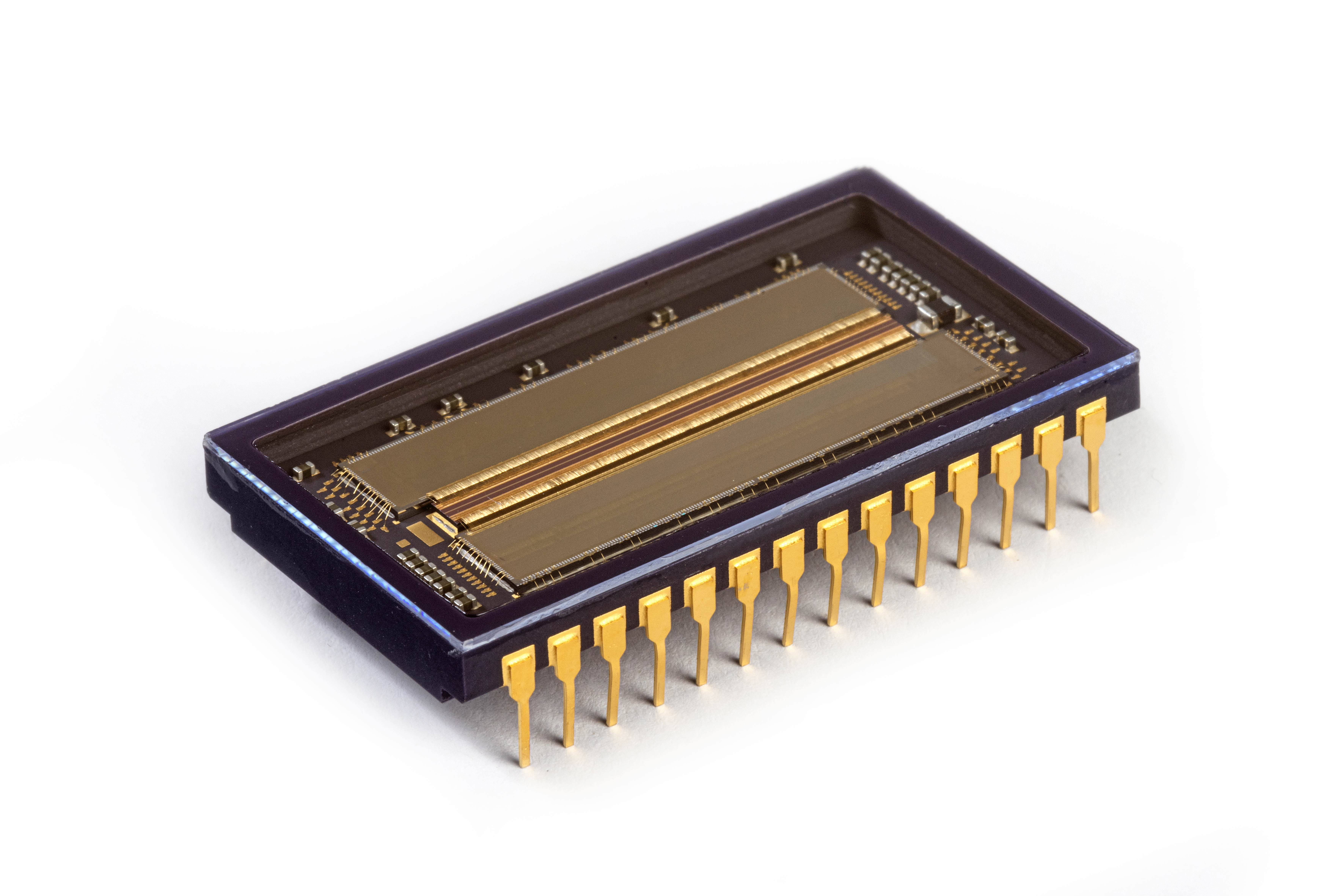

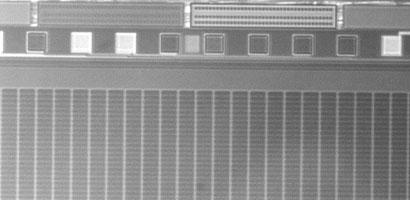

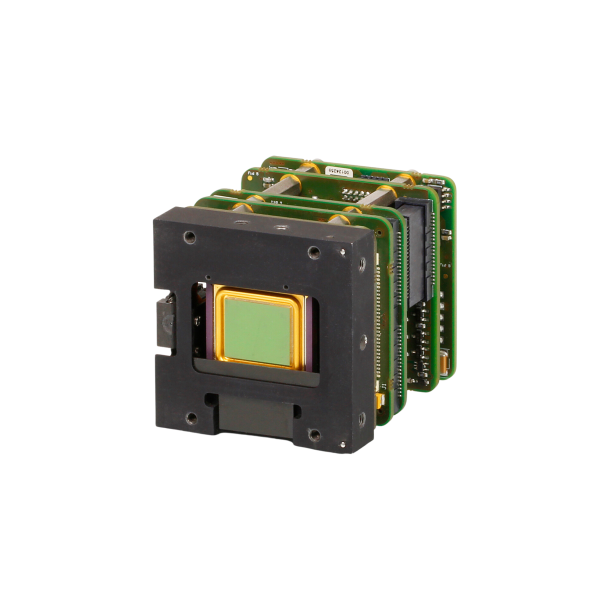

In the late 1960s and early 1970s, “first generation” linear arrays were developed. In the meantime, Charge Coupled Devices (CCDs) were invented, which made it possible to envision “second generation” detector arrays. These eventually arrived in the 1990s and provided large 2D arrays and linear arrays with square and rectangular pixels.

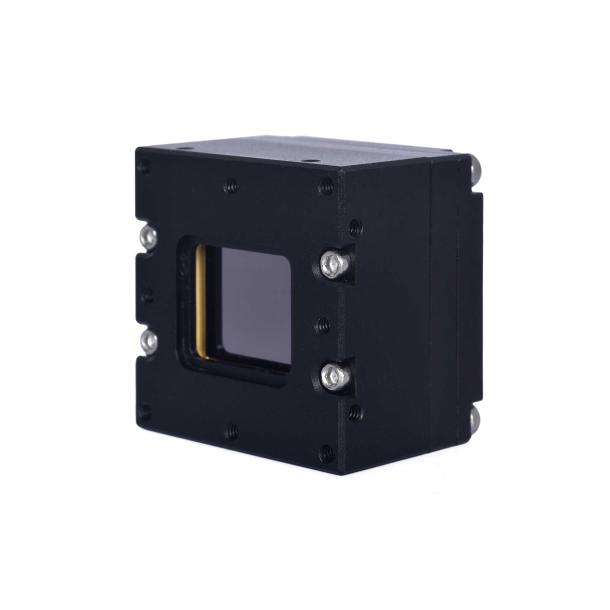

Infrared Detector Types

An infrared detector is simply a transducer of radiant energy: it converts radiant energy in the infrared into a measurable form. Infrared detectors are used in a variety of military, scientific, industrial, medical, security, and automotive applications. They are available as single-element detectors in circular, rectangular, cruciform, and other geometries, as linear arrays, and as 2D focal plane arrays.

Infrared detectors can be made from many compound semiconductors, each with its own characteristics.

Infrared Glossary

The infrared world is a high-tech world with its own jargon. Here you can find an overview of some of the most frequently used terms. In addition to this infrared dictionary, you can also find a list of infrared acronyms.

Infrared technologies

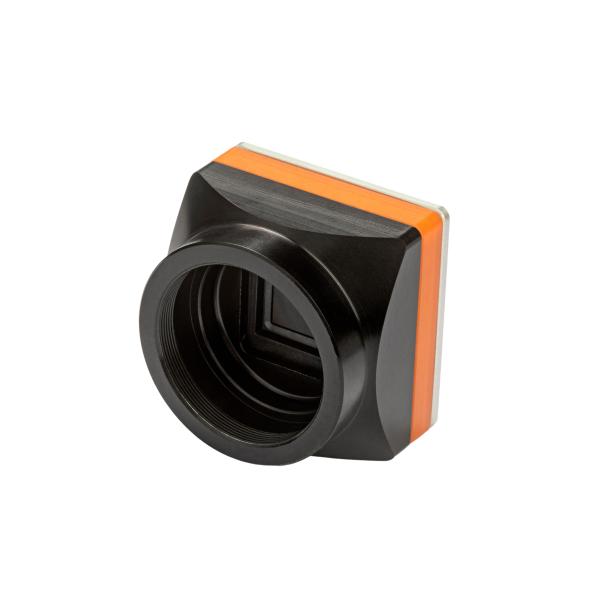

Shortwave Infrared technology

Shortwave Infrared (SWIR) technology has emerged as a powerful imaging tool, offering unique capabilities that complement Longwave Infrared (LWIR) and Midwave Infrared (MWIR) systems.

Midwave Infrared technology

Midwave Infrared (MWIR) technology plays a crucial role in thermal imaging, capturing radiation emitted directly from objects without the need for an external light source.

Longwave Infrared technology

For decades, infrared cameras utilizing Longwave Infrared (LWIR) and Midwave Infrared (MWIR) sensors have been a staple in military applications for detecting human activity.

What's new in Infrared technology?

DX KOREA 2024

Visit Exosens at DX KOREA 2024 from September 25 to 28, 2024, at KINTEX Exhibition Center Ⅱ, Korea.

Vision China 2024

Visit Exosens at Vision China 2024 from July 8 to 10, 2024, at the Shanghai New International Expo Centre, China.

Eurosatory 2024

Visit Exosens at Eurosatory 2024 from June 17 to 21, 2024, at the Paris Nord Villepinte exhibition centre, France.